In the end I actually don't even care so much if this will really change things fundamentally. But since yesterday I've had a couple of ideas on how I could get back into writing more regularly again. Because if I'm writing more, I'm reflecting more on things. And if I'm reflecting on things, I'm usually taking much, much better care of myself.

So for now I've decided to simply enjoy the excitement that comes with the newly acquired inspiration and aim to use the momentum to initiate a small reboot (simply adjusting a couple of things, no revolutionary steps planned, for now).

Let's see.

This post describes how to set up a static website on wasabi.com. It is basically a paraphrased version of this support document, but with a couple of updates and some clues that took me some time to find out.

Wasabi is a cloud object storage service that aims to be 100% compatible with Amazon's S3 while being significantly cheaper. And they are! I'm running my Seafile server with a Wasabi backend, and not only does it work seamlessly, it also costs only a fraction of what I paid at AWS.

In an effort to consolidate services a bit more, I've started looking into ways to use Wasabi for hosting my static websites as well. It's not that these are incredibly expensive on AWS (this would require them to at least have some sort of traffic), but I like to have things not spread across too many services, especially if something works that well for me.

After having no real backups of my private infrastructure for quite some time, a colleague recommended backuppc. It may look like it comes straight from the '90s, but it is so straightforward in what it does that it single-handedly smashed all my half-assed-not-fully-thought-through bash scripts to pieces.

Basically it does exactly what my half-assed-not-fully-thought-through bash scripts tried to do as well: rsync directories from several machines to a central place, where they get stored incrementally. Only that this one works.

Unfortunately the install routine is completely messed up, and after going through it a couple of times, I've decided to document it here, so that I know where to find it next time.

This is just a quick summary of the sessions I’ve visited at 36c3. So many things happened during those two days that this is merely a reminder for me, so that I don't forget everything.

This post covers day 1 (2019-12-27). There’s also a post where I’m covering my second day.

Link to the talk website

No question, the web, and search in particular, has learned a lot of new tricks over the years. It can do more things, faster. And it even comes with me (as long as I'm not in the U-Bahn in Berlin).

Unfortunately its nature has changed as well, which is most visible when looking at search these days.

A search result today is focused on offering services.

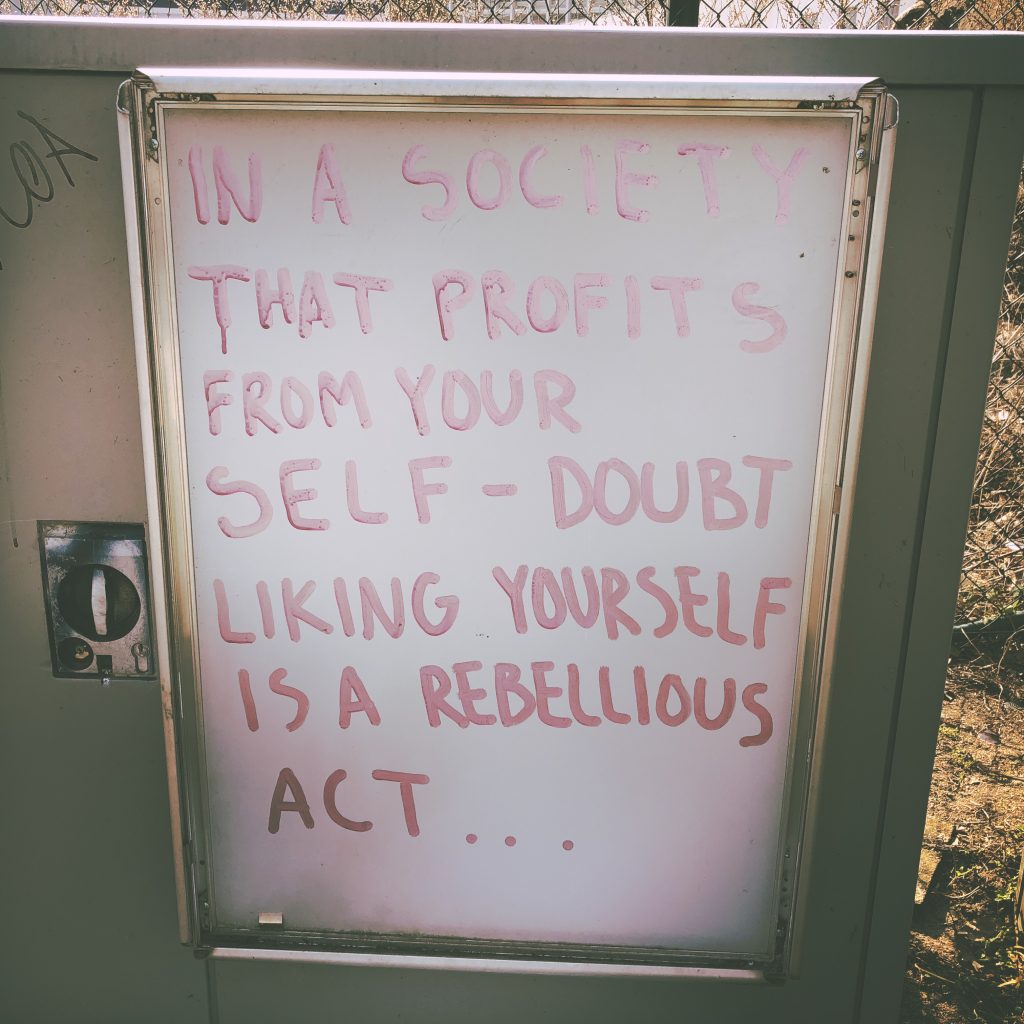

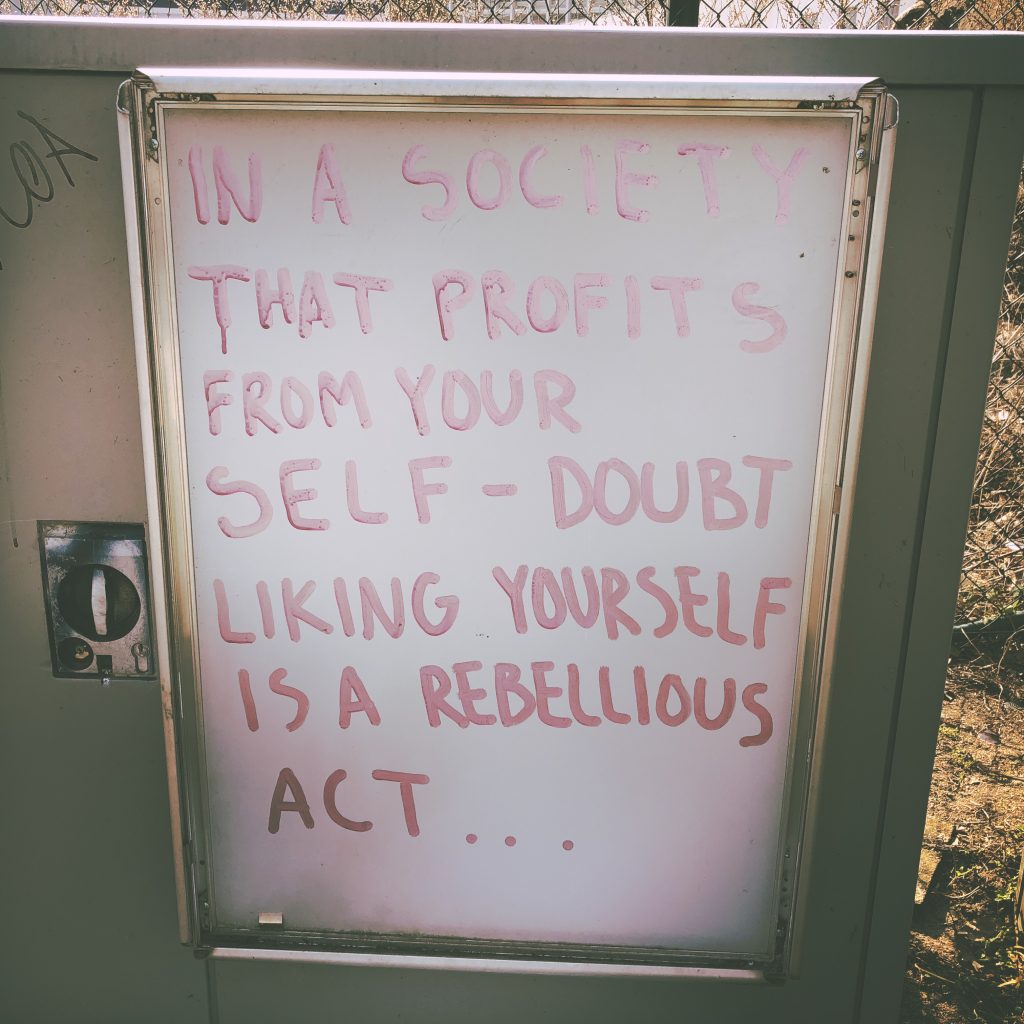

Liking yourself is a rebellious act

Found this on my way back home.

Yes, it's exactly as cold and ugly as you'd think.

To be honest, sometimes I miss Vienna. Especially when it's almost April and Berlin still gives you nothing but snow, rain and grey sky all day long. sigh

My current tasks regularly involve fiddling around with the live website of a rather large eCommerce venture. Sometimes I need to review or research the behaviour of a third-party script, sometimes it’s about analyzing timing, writing automated tests or implementing crazy A/B tests.

To make things a little bit more interesting, I have access neither to the code nor to a testing environment, so I cannot inject JavaScript or play around with the templates in order to add my tests. So I’ve built myself a little toolset that allows me to do a lot of fancy things without knowing anything more than what the website is showing to the outside world (which turns out to be quite a lot).

Please note, all this is, of course, limited to the local browser and it’s not always perfect when it comes to timing and sync vs. async operations, but still...!

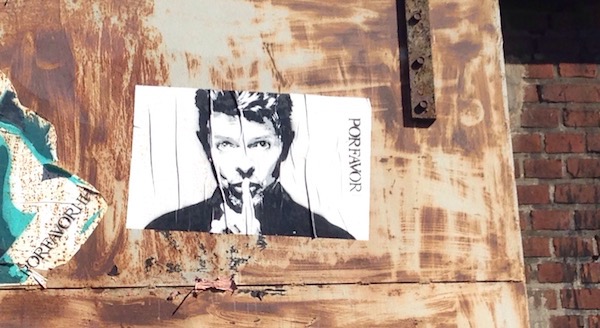

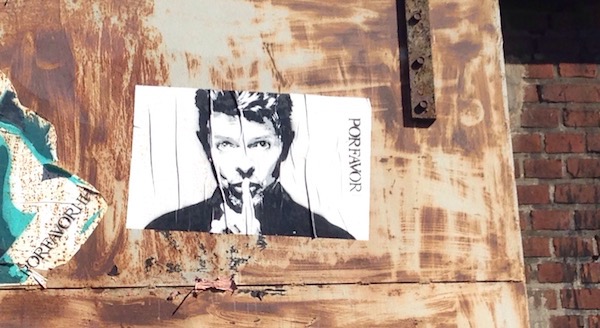

During my stay in Bologna this summer, I had the chance to see the amazing "David Bowie is..." exhibition at MAMbo and I must say, it impressed me. Deeply!

Coming from a completely different background and time, Bowie was never really on my radar. So it may not be surprising that it literally blew my mind to get a glimpse of the holistic vision this man had as an artist. There may be parts of his oeuvre that are still not my cup of tea, but two particular aspects of Bowie's life and art definitely resonated with me:

Wow!

I originally found this quote on FATPOD41 and decided to immediately like it a lot. Feels to me like it was kind of relevant atm.

And isn't that a minor miracle? State of the world today and the level of conflict and misunderstanding. That two men could stand on a lonely road in winter and talk. Calmly and rationally. While all around them, people are losing their minds. #Fargo

Fortunately there is a simple solution for my little issue: an article on Wikipedia.

This article contains a List of Piano Composers of various epochs, and the list of composers of the 20th century in particular holds lots of great musicians I have never heard of!

Mikalojus Konstantinas Čiurlionis, for example, a Lithuanian painter, composer and writer, whose music is on my playlist right now.

This simple insight got me thinking. I love getting excited about the latest thing that just came to my mind. But rarely do I manage to sit down and execute it.

Most of the time, that doesn't really bother me. The next idea is just around the corner and by jumping ship and taking on the next awesome idea, the excitement never stops.

This creates something like a sea of potential chances. And it feels great to swim through it, knowing that I could implement any of them if I wanted to.